Picking new ingredients every time to make something new can be fun when you are cooking, but not when you have to do it repetitively and at scale. Imagine a service where both the inputs and the outputs keep changing, and you have to deliver the output at scale every day. Would you change your code, deploy it, and test it every single time? Wouldn't that get frustrating, unproductive, and error-prone? And far too unstable, right?

Let's see what we can do about it!

How about transformers to the rescue?

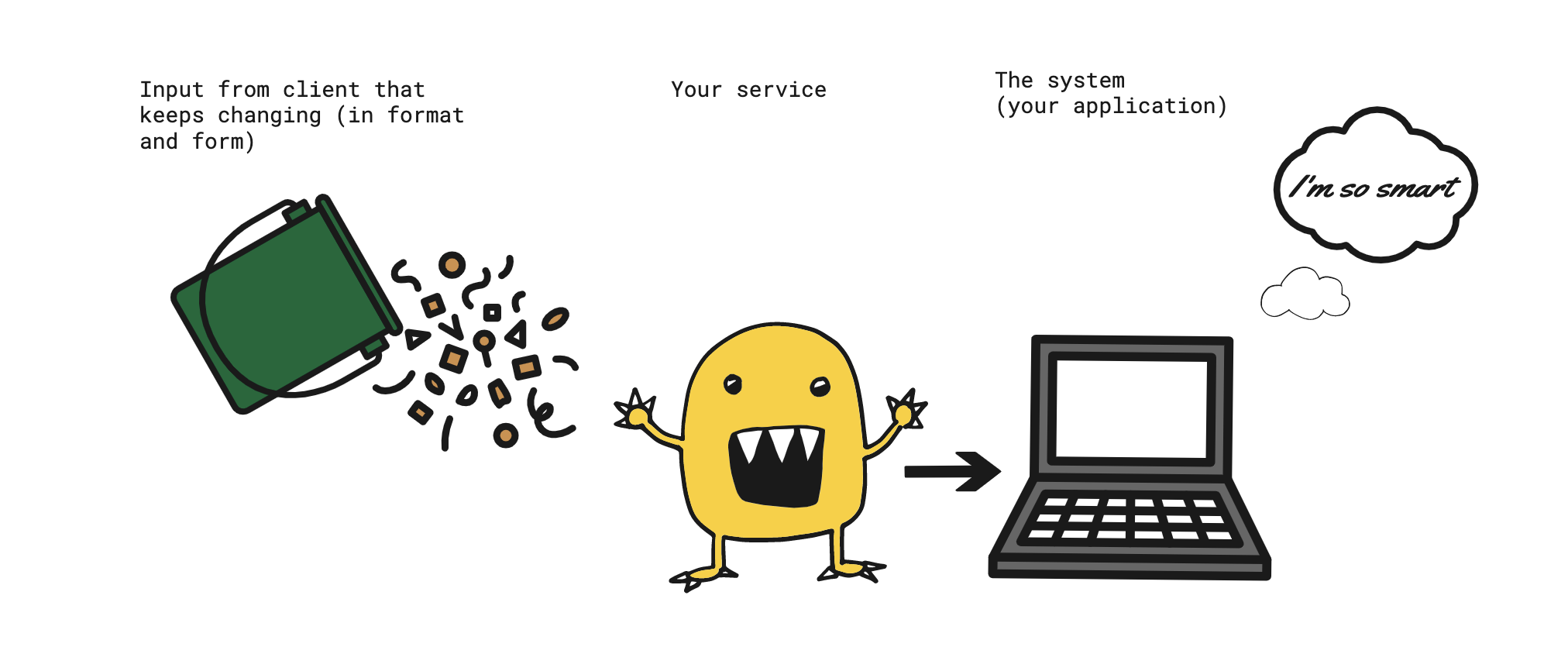

Transformers are these magical monsters into which you can put anything and get what you need, just by providing some minimal instructions. Cool, right?

In terms of microservices, a transformer could be any service in your system that takes raw data and converts it into a form your system can understand. We bundle this set of transformer bots into a system known as the inference service. It can deduce new things the system requires from the raw data, or convert that data into a well-defined format.

Your system might think it's smart, but it's actually this service that's doing the job. It converts all the messy, badly formatted data from the client into a form that has all the ingredients the system needs to give you the final output.

Technically, this service acts as an interpreter for your system.

Why do we need it?

Any service that is exposed to client data cannot be static; it has to be dynamic to support all user requirements at scale and still operate smoothly. You cannot expect your customers to send data in well-defined formats or with all the required details filled in. Expecting that leads to much longer onboarding and drawn-out training cycles. And if that's the expectation, your system doesn't add much value in the middle.

Let me give you an example:

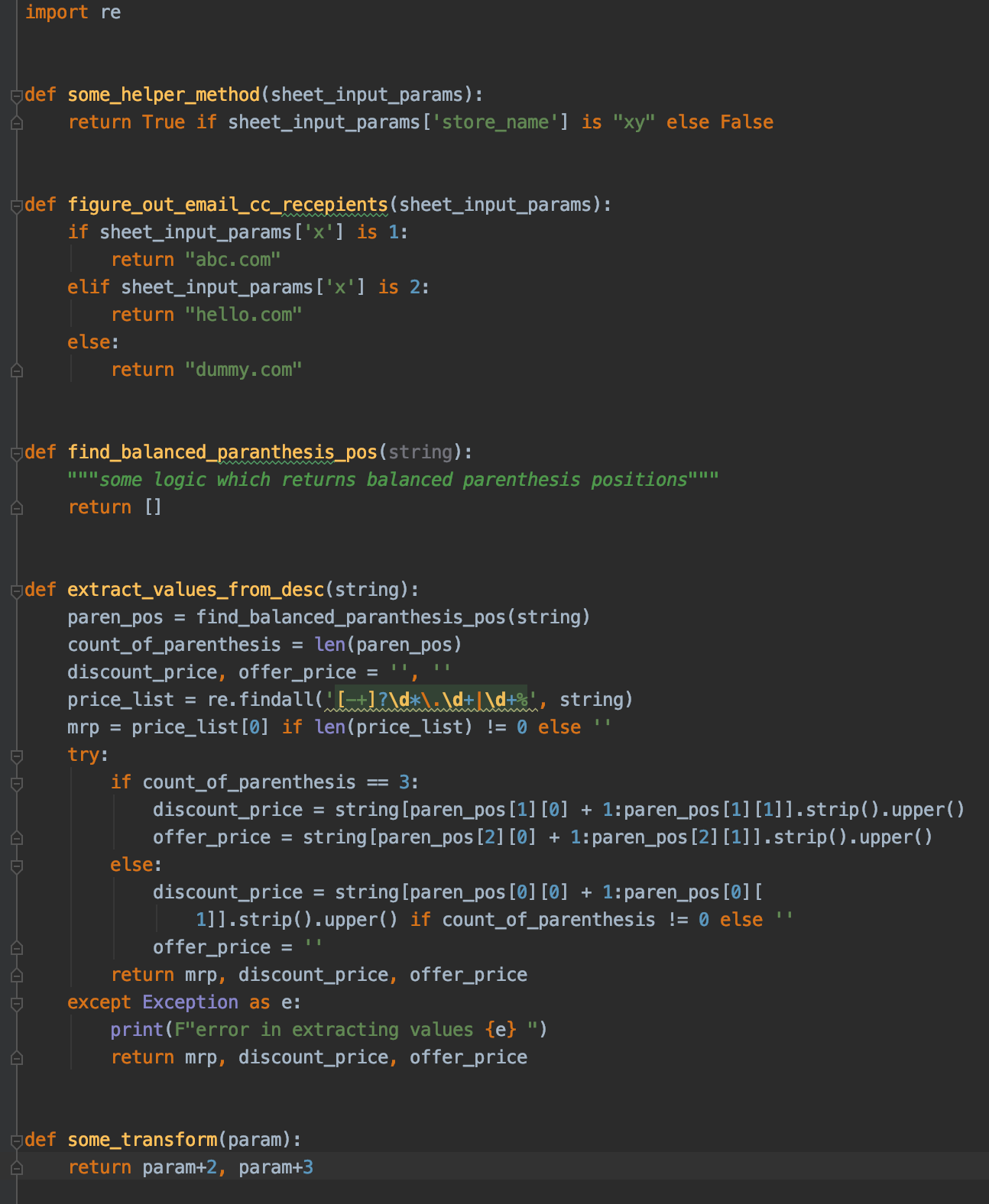

Suppose you have a system in which a user can upload sheets and get the desired output. Users may upload sheets with different column names each time, but your system understands only a fixed set of column names. If you have hardcoded these mappings in your code like this, along with the logic to extract complex values from the input (which can itself be specific to particular columns or clients), then it is going to be arduous, if not nearly impossible, to scale, since you cannot keep updating your code for every user. Can you?

In the case above, you cannot manually map each input column to an output column name and hand-write the extraction logic for every column or client. That would be arduous and unscalable.
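To make the pain concrete, here is a minimal sketch of what the hardcoded approach tends to look like. The client names and column names (`Headline`, `Cost`, `Product Name`, `MRP`) are hypothetical and only serve as an illustration; this is not code from our system.

```python
# Hypothetical clients and column names, for illustration only.
def normalize_row_hardcoded(client, row):
    """Translate one uploaded sheet row into the columns the system understands."""
    if client == "client_a":
        return {"title": row["Headline"], "price": row["Cost"]}
    if client == "client_b":
        # Same meaning, different header names: another branch, another deploy.
        return {"title": row["Product Name"], "price": row["MRP"]}
    # Every new client or renamed column lands here until the code is changed.
    raise ValueError(f"no mapping coded for client {client!r}")
```

Every new client, team, or renamed header means editing this function, testing it, and deploying it again.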

So, how exactly does this service fit in our system?

On our platform, Kubric.io, you can upload a sheet containing the data you want in your creatives and then, with the click of a button, get all the creatives generated: banners, videos, feed cards, etc. Different clients, or even different teams within the same client, upload these sheets, and since people follow different conventions, the sheets can differ.

This is where inference comes in: a service that can understand the inputs and map them to the required output column names, extract values from an input column and split them into separate columns the system understands, look up values in a database to create a column, and so on, all without any code changes or effort on the system's end. It makes lives easier.
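As a rough sketch of the idea (not our actual implementation or DSL), the three operations mentioned above, renaming a column, splitting a value, and looking a value up, can be driven by per-client configuration instead of code. Everything here is an assumption made for illustration: the rule format, the operation names, the column names, and the in-memory `COLOR_CODES` dictionary standing in for a database.

```python
# Hypothetical lookup table standing in for "some db".
COLOR_CODES = {"red": "#FF0000", "blue": "#0000FF"}

def rename(row, rule):
    # Copy one input column to the column name the system understands.
    return {rule["to"]: row[rule["from"]]}

def split(row, rule):
    # Split one input value into several system columns.
    parts = [p.strip() for p in row[rule["from"]].split(rule["sep"])]
    return dict(zip(rule["to"], parts))

def lookup(row, rule):
    # Derive a new column by looking the input value up (None if unknown).
    return {rule["to"]: COLOR_CODES.get(row[rule["from"]].lower())}

OPERATIONS = {"rename": rename, "split": split, "lookup": lookup}

def transform(row, rules):
    """Apply a client's rules, in order, to one uploaded row."""
    out = {}
    for rule in rules:
        out.update(OPERATIONS[rule["op"]](row, rule))
    return out

# Per-client configuration: plain data that could live in a file or a database.
CLIENT_A_RULES = [
    {"op": "rename", "from": "Headline", "to": "title"},
    {"op": "split", "from": "Size", "sep": "x", "to": ["width", "height"]},
    {"op": "lookup", "from": "Colour", "to": "color_hex"},
]

row = {"Headline": "Summer Sale", "Size": "300 x 250", "Colour": "Red"}
result = transform(row, CLIENT_A_RULES)
```

With this shape, onboarding a client whose sheet uses different headers means writing a new list of rules, not a new branch in the code.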

This is just one of many use cases where inference comes in handy: a configurable, smart, and scalable middleware that translates the customer's language for the system.

The challenge that we faced?

This is not as straightforward as it looks, since customer requirements keep changing. On any day, a customer could say they are changing the format in which they send the data, or even the data itself. So how do we make the service as configurable as possible, so that most new features or changes don't require any code changes? That way, you can just chill and do something more productive.

This was just an overview of the problem and the probable solution. In the next part, we'll get more technical and see how we solved this problem efficiently and designed our own DSL (domain-specific language).

References:

Image Ref

1. https://tenor.com/view/womens-day-stay-tuned-updated-gif-16526278
